2025 AAAS Fellows at UC San Diego

TILOS Chips team co-lead Farinaz Koushanfar is one of eight UC San Diego faculty elected 2025 Fellows of the American Association for the Advancement of Science (AAAS). Congratulations to all!

Congratulations to Sean Gao, recipient of a Best Undergraduate Teacher Award!

TILOS Chips and Foundations team member Sicun (Sean) Gao was honored with a 2024-2025 UC San Diego Jacobs School of Engineering Best Undergraduate Teacher Award.

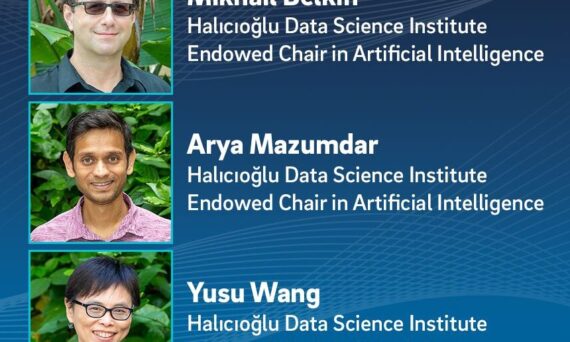

Congratulations to HDSI’s newest Endowed Chairs!

TILOS Director Yusu Wang, Deputy Director Arya Mazumdar, and Foundations team member Mikhail Belkin are the newest Endowed Chairs in AI and Data Science at UC San Diego’s Halıcıoğlu Data Science Institute, the administrative home of TILOS.

Congratulations to Nisheeth Vishnoi for his election to the 2026 Class of IEEE Fellows!

The 2026 IEEE Fellows Class acknowledges Vishnoi “for contributions to algorithms, optimization, and fairness in decision-making.”

UC San Diego is Strengthening U.S. Semiconductor Innovation and Workforce Development

Andrew Kahng is part of several major national initiatives that together are transforming how future engineers learn to design and build computer chips. His work is helping UC San Diego become a driving force in the nation’s growing semiconductor innovation ecosystem.

Dr. Henrik Christensen Discusses the Future of the Global Robotics Industry

An interview with Dr. Henrik Christensen, TILOS Robotics team co-lead and Distinguished Professor of Computer Science at UC San Diego, about the “marriage” of AI and physical automation. Christensen explains why the market’s fixation on sci-fi concepts has created a disconnect, leaving practical robotics companies poised for growth that is not yet priced in. The […]

Congratulations to Rose Yu, recipient of the Samsung AI Researcher of the Year award!

UC San Diego professor and TILOS affiliate faculty member Rose Yu is one of three winners of the 2025 Samsung AI Researcher of the Year award. The winners were recognized at the ninth annual Samsung AI Forum, held September 15-16, 2025, in Yongin, South Korea.